Today’s scenario is probably what the future looks like. A new Linux exploit, named “Dirty Frag”, was released yesterday after the embargo elapsed. No patch or broadly available detections yet exist; guidance from security vendors, at the time of this writing, is that they are “working on related detections.” We don’t have to wait for humans to write detection rules; we can find this using detection lattices without waiting for static rules to be written.

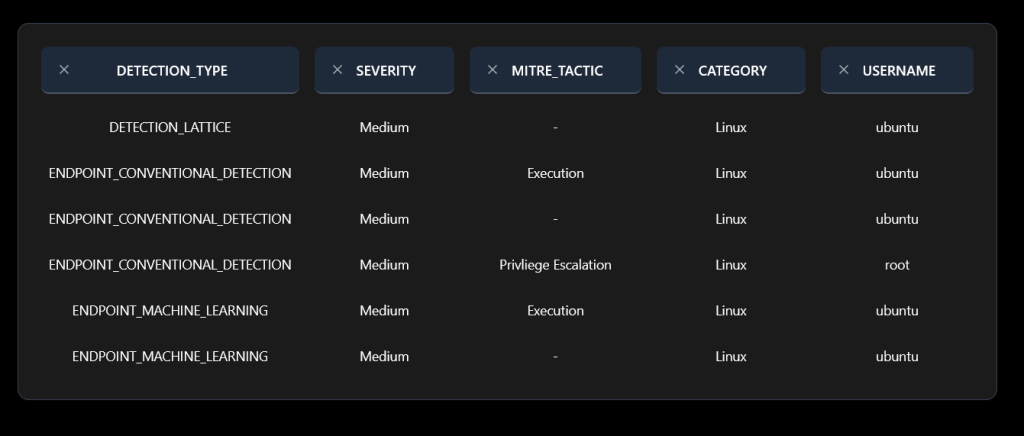

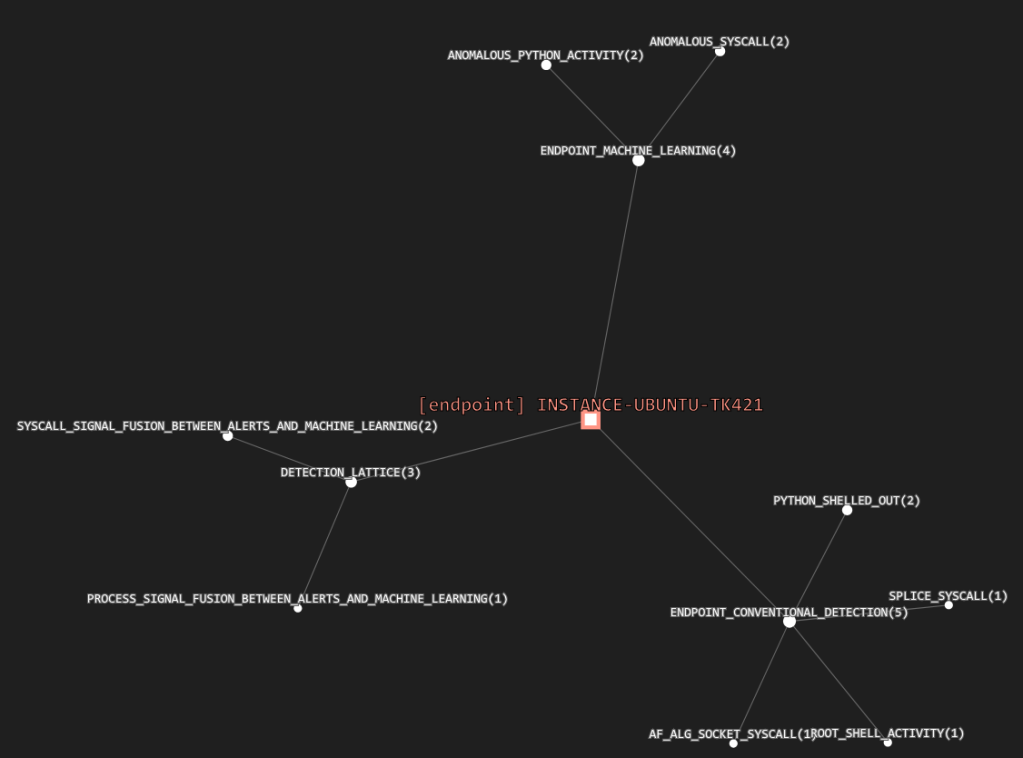

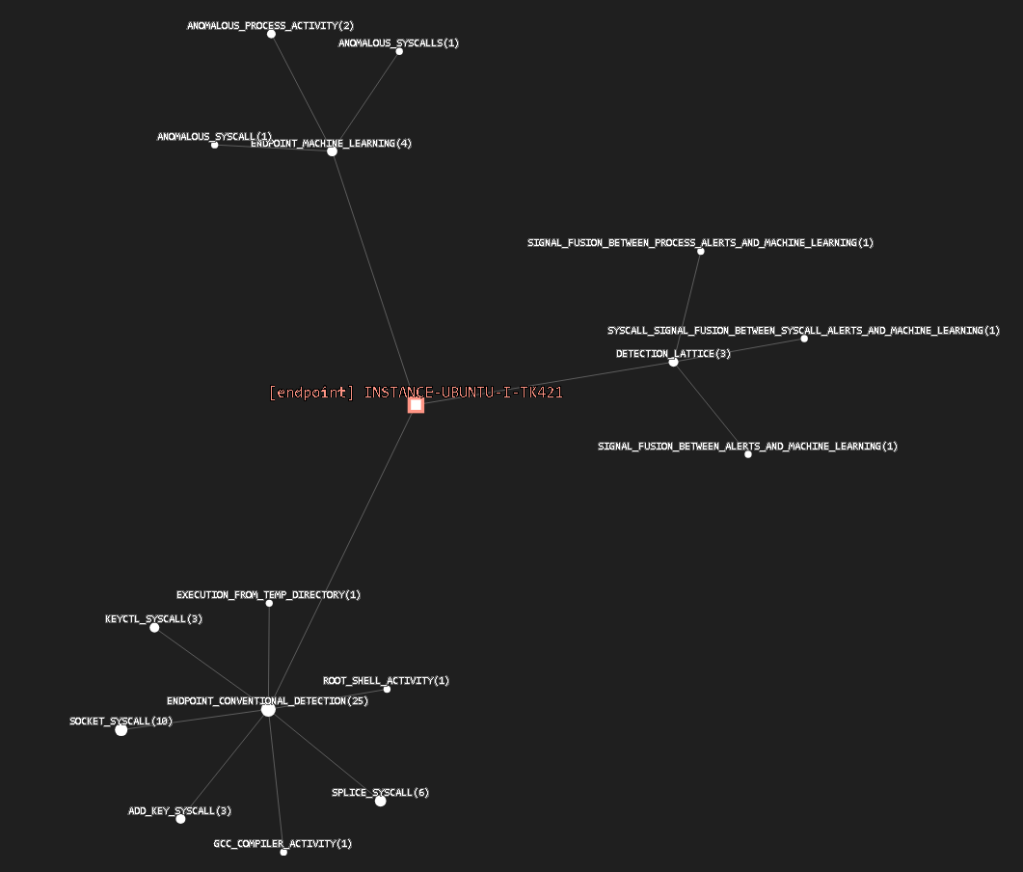

The Dirty Frag PoC lights up a detection lattice like a Christmas tree with at least three conventional behavioral detections, four machine learning detections, 21 unusual syscalls, and three signal fusions between these detection classes as shown below:

While none of these, by themselves, are sufficient to produce a critical alert, the combined set creates a strong alpha signal detection lattice. This lattice tells us that anomalous compiler activity, followed by anomalous process activity from the temp directory, was followed by a cluster of syscalls, and ultimately a root shell. Each of these alerts do sometimes come from benign outlier activity, but the confluence is too unusual to be coincidence.

There is one school of thought that this class of exploit is low or medium risk because it is not remotely exploitable without existing shell access. I think this kind of assumption works against us, and is one of the ways vuln management misses attack paths and things get popped. If an attacker is going to obtain a root shell through an exploit like this, they’re most likely going to get initial execution by looking for an RCE or RCI vuln in a web application (often a target rich environment) not a CVE in the web server process . Maybe something that has a CVE, maybe something bespoke in the application code that the owners have not noticed, or have given low priority because it yields low privilege execution. In order to see these kinds of attack paths, you need a fusion of CVE and appsec data. Explore your combined attack surface and ask two questions 1) What can I get right now? 2) What path can I take to get something valuable?

Also, I think the conventional “scan, attack, exploit, actions” model is not necessarily how operators work. It is not always feasible to achieve actions on objectives in linear time on a target of choice if the conditions are not quite right. So some crews are “collectors” in that they collect as much persistence as they can, in as many networks and cloud accounts as they can, so that when a privesc becomes available, they can jump on it and be the first. Sometimes we do see a conventional discovery, execution, initial access, persistence, privesc, lateral movement cycle in a short windows of time, but sometimes it comes from a crew or an operator who have been persisting in the environment for a while. So sometimes people are looking for discovery or initial access that happened a long time ago and think everything is fine because they are not detecting the start of a cycle.

Although I suppose model based vuln development changes all this in that the time frame will probably be compressed, and there are rapid cycles to find. The question I see is what happens if the cycles become faster than human response or even human cognition. We’re scaling up offensive (attack) art first, with AI, because the models are good at that, and nothing attracts eyeballs and sells things like dramatic attack case studies. We’re not really scaling up agentic defense yet.

This scenario – a new exploit without a patch or available detection rules – is probably going to more more and more common as AI-assisted vulnerability and exploit development continues to scale. As the volume and velocity increases, we will not always have time for humans to manually write detections. We will increasingly need robust detection lattices capable of identifying emerging threats without de novo signatures, exploit-specific rules, or even prior threat intelligence